Don’t Waste Your Testing Team’s Talents – Automate the Repetitive

Many companies shy away from automated testing: it cannot replace manual testing, they reason, and so why invest so much in it? This view can be defended for user interface testing, but it falls short of the reality of web security testing or, more precisely, web vulnerability scanning. Read on to learn how an automated web vulnerability scanner can help you get the best out of your web testing and security teams.

Many companies shy away from automated testing: it cannot replace manual testing, they reason, and so why invest so much in it? This view can be defended for user interface testing, where humans are faster and more subtle than automated testing and the testing itself is less arduous than the automation of it.

But it falls short of the reality of web security testing. Beyond creativity and intuition, security requires cycles consisting of hundreds of repetitive tests. These tests take humans days, if not weeks, to complete, yet require none of the qualities that humans bring to testing. What is more, some security tests cannot be completed manually at all.

Automated vs. Manual Web Security Scanning

Let's compare two common scenarios. Two companies with similar web applications are getting ready to deploy their first versions. One company decides to invest in automated security testing by using a web vulnerability scanner before the first version is deployed, while the second team wants to save the initial investment and performs only manual testing.

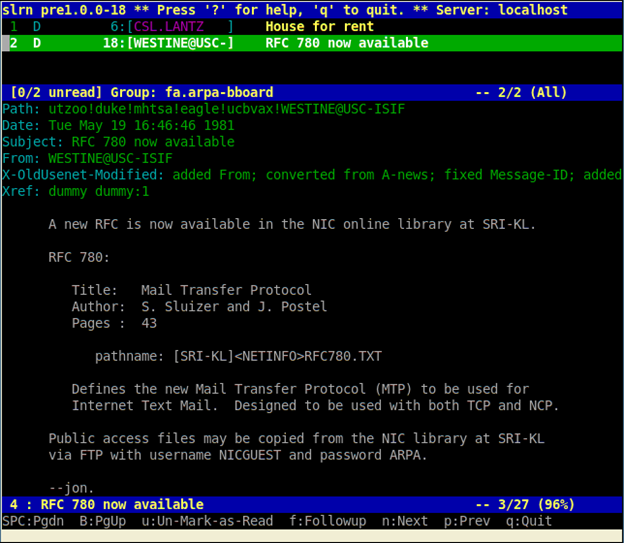

Automating Web Application Security Testing

The first team's automated security testing offers excellent coverage of the web application by performing thousands of tests in a few hours. Even in a web application with hundreds of possible attack vectors, the automated web security scanner never skips an input or neglects a field. While the faults discovered by the scanner are fixed (and, in turn, verified by the web security scanner), the testers invest their time in researching and testing logical vulnerabilities, where their intelligence and skill are truly needed. Because they did not have to manually perform the most time-consuming security tests, such as checking every input for technical vulnerabilities like SQL injection, their schedule is flexible enough to allow additional tests, created as the testers become more familiar with the web application and its logic.
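The exhaustive coverage described above can be illustrated with a minimal sketch. It is not how any particular scanner is implemented; the form inventory, field names and payload list are hypothetical, and no requests are actually sent. It only shows why a machine-generated test plan cannot skip an input: every field is paired with every payload.

```python
from itertools import product

# Hypothetical inventory of forms and their input fields (illustrative only).
FORMS = {
    "/login": ["username", "password"],
    "/search": ["q"],
    "/payment": ["card_number", "expiry", "cvv", "amount"],
}

# A few well-known probe strings; a real scanner would ship far more.
PAYLOADS = [
    "' OR '1'='1",                # classic SQL injection probe
    "<script>alert(1)</script>",  # reflected XSS probe
    "../../etc/passwd",           # path traversal probe
]


def build_test_plan(forms, payloads):
    """Return one test case per (path, field, payload) combination."""
    plan = []
    for path, fields in forms.items():
        for field, payload in product(fields, payloads):
            plan.append({"path": path, "field": field, "payload": payload})
    return plan


plan = build_test_plan(FORMS, PAYLOADS)
print(f"{len(plan)} test cases planned")
```

The plan grows as fields multiplied by payloads, which is why the same work done by hand takes days and is prone to skipped inputs.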

Manual Web Application Security Testing

The second team, meanwhile, wastes days on performing SQL injection tests and struggles to deal with unforeseen events. The tester who was supposed to go over all of the payment forms quits mid-project, the newest tester is slower than the schedule allows for and lacks experience in the security field, and two testers accidentally spend three days performing the same tests. Time runs out before all security testing is performed, and those tests that are performed suffer in quality because of tester fatigue: testers skip inputs, miss fields and cannot develop their testing skills. As a result, logical vulnerability testing stays limited to its original design instead of expanding through the testers' creativity as they become more familiar with the web application.

The First Results

Both companies deploy their web-based products and users start signing up for the services. The first team's product offers excellent security, although of course the users are oblivious to this. The second team's product is vulnerable, but being a young product it has not yet attracted the attention of hackers, and the faults are not found.

Keeping Up with Changes in Web Applications

While preparing for the second version of the web-based product, both teams lose a couple of testers. The first team's security automation for the old features is already complete, and a new tester adds automation for the new features; since the web application security scanner itself knows which tests to perform and how, the new tester's inexperience is not a factor. Freed from performing injection, inclusion and similar repetitive tests, the team has the time to learn and thoroughly test all features, new and old.

The second team is not so lucky: their API tester has quit, leaving only brief manual testing scripts and an assumption that the first version was perfectly tested. While trying to fill the knowledge gap, the second team considers and dismisses the idea of a full security check on their second version. Since they are short on time, they begin to economise. They focus the API security testing on new features, limit their browser testing to the latest version of each browser, and cut corners on input testing - testing only some of the fields, with some of the possible inputs. On top of that, their new testers aren't very knowledgeable in security, and because of the time pressure are given poor instructions and no testing scripts. They test only what they know, leaving whole features open to multiple attack vectors. They deploy a product that has accumulated two versions' worth of security flaws.
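The cost of testing "only some of the fields, with some of the possible inputs" can be made concrete with a small coverage calculation. This is an illustrative sketch: the field names, attack classes and list of performed checks are all hypothetical.

```python
from itertools import product


def coverage_report(fields, attack_classes, performed):
    """Compare the checks actually performed against every required
    (field, attack class) pair and return (coverage ratio, missing pairs)."""
    required = set(product(fields, attack_classes))
    done = required & set(performed)
    missing = sorted(required - done)
    ratio = len(done) / len(required) if required else 1.0
    return ratio, missing


# Hypothetical example: three fields, two attack classes, two checks done.
FIELDS = ["email", "coupon_code", "amount"]
ATTACK_CLASSES = ["sql_injection", "xss"]
PERFORMED = [("email", "sql_injection"), ("amount", "sql_injection")]

ratio, missing = coverage_report(FIELDS, ATTACK_CLASSES, PERFORMED)
print(f"coverage: {ratio:.0%}, untested checks: {len(missing)}")
for field, attack in missing:
    print(f"  not tested: {attack} on {field}")
```

Each pair in the missing list is an attack vector that ships untested, and the list carries over to the next version unless someone goes back to close it.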

As the third version approaches, both teams want to work in short testing iterations that complete a cycle once a week, rather than perform one large cycle every month or two. The first team runs its automated tests every week, and begins to release mini-versions: one or two new features or updates every week. The second team finds that they require two weeks to perform each security cycle before they can move on to testing features; rapid iterations and weekly releases are impossible unless they forgo security testing.
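The scheduling problem is simple arithmetic. The sketch below uses made-up numbers (test count, minutes per test, team size) chosen only so that the manual cycle comes out at the two weeks mentioned above; they are assumptions, not measurements.

```python
def cycle_days(num_tests, minutes_per_test, testers, hours_per_day=8.0):
    """Working days needed for one full security cycle done by hand."""
    total_hours = num_tests * minutes_per_test / 60
    return total_hours / (testers * hours_per_day)


def fits_cadence(cycle_length_days, release_interval_days):
    """True if a full security cycle fits inside one release interval."""
    return cycle_length_days <= release_interval_days


# Assumed figures: 2400 manual checks, 4 minutes each, 2 testers.
manual_days = cycle_days(num_tests=2400, minutes_per_test=4, testers=2)
print(f"manual cycle: {manual_days} working days")
print("fits a weekly release:", fits_cadence(manual_days, release_interval_days=5))
```

Under these assumptions the manual cycle alone takes twice as long as the release interval, before any feature testing begins.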

Worse yet, the second team is now set in its ways and is falling behind the field. Because they work manually, they cannot mimic a malicious hacker's automated work and their view of security risks is distorted by this difference in work methods. They continue testing the same attack vectors in the same manner, while hackers, using ever-improving automated tools, have found new types of security risks they can exploit, and new ways to exploit old risks. The first team's web application security scanner is updated by its vendor's research team; the second team's manual testing keeps looking at the same things in the same way, checking for the same result.

Web Application Vulnerabilities Have No Price

And so it goes, version after version: the first company's automated testing is always performed faithfully and quickly, and always checks for the latest types of web application vulnerabilities, which keeps the company on a path to success.

The second company, meanwhile, accumulates testing gaps, with each of its versions carrying more security risks. Eventually, the second company's product is hacked and user details are published. The product loses clients, the business gets a bad reputation, and its future becomes uncertain.

Automate Web Application Security Testing

You can't, and shouldn't want to, take the human element out of testing; humans are intuitive, creative and natural analysers, and you want them testing where automated tools cannot. But the best way to utilize your testing team's skills is by automating the days' worth of technical, robotic testing on which they're currently being wasted and which no human can perform perfectly. A web application security scanner does what computers do best - running hundreds or thousands of operations in a matter of hours without missing anything - and lets humans do what they do best - solve logical problems.