Scaling Up and Automating Web Application Security

This blog post summarizes a security talk given by Netsparker CEO Ferruh Mavituna about scaling up and automating web application security. Ferruh discusses the stages of vulnerability detection, website and vulnerability categories, the benefits and limits of automation, pre- and post-scan challenges to automation, and the elimination of false positives.

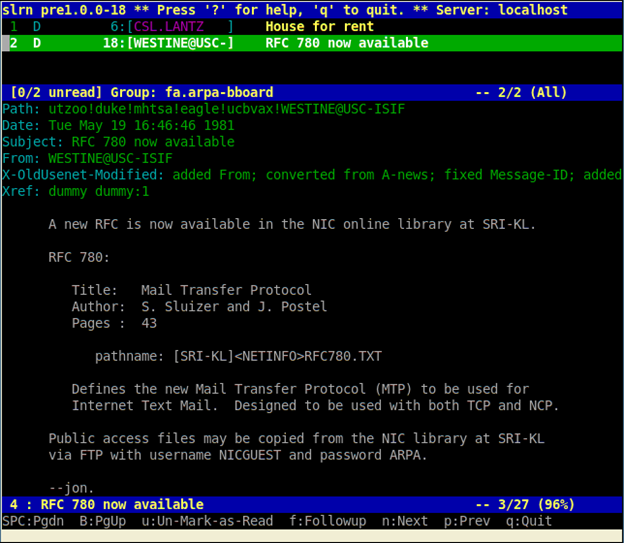

A few years ago, Netsparker CEO Ferruh Mavituna gave a talk at Infosecurity Europe, a major security conference. He spoke about why organizations need to scale their web security operations and how automation can play a key role. Securing web apps manually requires outsourcing, money, time, and a large team. Automation is the solution.

There are three stages to the vulnerability detection process:

- Discover & Prioritize

- Identify

- Automate

Discovery is the necessary starting point, since you need to know your web app inventory before you can categorize your websites. Public websites and internal websites score high in terms of mission criticality. Staging websites are important too, because the cost of addressing vulnerabilities that reach production increases over time. Ferruh observed that the longer you leave a bug, the more expensive it becomes to fix.

Establishing internal asset management systems is important, and both processes and policies are required. But you have to know what you’re securing before you can secure it: if you miss websites and apps, you’ll miss their vulnerabilities. Automated application and website discovery, effectively a form of smart ‘port scanning’, is now a standard Netsparker feature.
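The discover-and-prioritize stage can be pictured as a small inventory that is ordered by mission criticality before any scanning starts. The sketch below is only an illustration: the `WebAsset` type, the category names, the numeric weights, and the example URLs are all assumptions made for this example, not something taken from the talk or from Netsparker.

```python
from dataclasses import dataclass

# Hypothetical weights. The talk only says that public and internal sites
# score high on mission criticality and that staging sites matter too.
CRITICALITY = {"public": 3, "internal": 3, "staging": 2, "other": 1}


@dataclass(frozen=True)
class WebAsset:
    url: str
    category: str  # "public", "internal", "staging", or anything else


def criticality(asset: WebAsset) -> int:
    """Look up the weight for an asset; unrecognized categories rank lowest."""
    return CRITICALITY.get(asset.category, CRITICALITY["other"])


def prioritize(assets: list[WebAsset]) -> list[WebAsset]:
    """Order assets from most to least critical, keeping ties in input order."""
    return sorted(assets, key=criticality, reverse=True)


# Made-up inventory, as a discovery step might produce it.
inventory = [
    WebAsset("https://staging.example.com", "staging"),
    WebAsset("https://www.example.com", "public"),
    WebAsset("https://old-campaign.example.com", "unknown"),
    WebAsset("https://intranet.example.com", "internal"),
]

scan_order = [asset.url for asset in prioritize(inventory)]
```

The point of the sketch is the ordering, not the numbers: a forgotten site such as the uncategorized campaign page still appears in the inventory, so it still gets scanned, just after the mission-critical ones.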

What kinds of vulnerabilities are there to identify? Ferruh mentioned four main categories:

- Configuration issues (at the server rather than the app layer)

- Known vulnerabilities and out-of-date dependencies

- Unknown vulnerabilities (possibly the most serious, since they have only just been introduced and so have no signature)

- Lack of security best practice and proactive measures (Netsparker now has a new Best Practice Severity Level)

Ferruh was keen to stress the benefits of automation: scaling, consistency, enforcing checks, finding the majority of vulnerabilities, and eliminating human errors on repeated checks. But he was also honest about the limits of automation. There are logical issues, issues that are extremely design- or platform-specific, and issues to do with discovering all the flows and processes in websites.

How can these benefits and limits of automation be reconciled? Ferruh summarized his solution with this rule of thumb:

“Automate What Can be Automated.”

Given the limits of automation, it’s only natural to expect challenges in the vulnerability detection process. Before you start a scan or test the security of an app, there are challenges with authenticated scans, URL rewrites, custom 404 pages, and form values. Netsparker has solved these problems, but it is with the post-scan challenges that it comes into its own.
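To see why custom 404 pages are a pre-scan challenge, consider a site that answers every unknown URL with a friendly page and a 200 status code: a crawler that trusts status codes alone would treat every guessed path as a real page. One common heuristic, sketched below, is to request a path that almost certainly does not exist and compare later responses against it. This is a minimal sketch under stated assumptions, not a description of how Netsparker handles the problem; `looks_like_custom_404`, the `fetch` callable, the 0.9 threshold, and `fake_fetch` are all hypothetical names and values chosen for the example.

```python
import difflib
import uuid
from typing import Callable

# Assumed shape of an HTTP helper: url -> (status code, response body).
Fetch = Callable[[str], tuple[int, str]]


def looks_like_custom_404(
    fetch: Fetch, base_url: str, candidate_url: str, threshold: float = 0.9
) -> bool:
    """Guess whether candidate_url is really a 'not found' page."""
    status, body = fetch(candidate_url)
    if status == 404:
        return True  # The server says so directly.

    # Ask for a random path that should not exist, to learn what this
    # site's 'not found' response looks like.
    probe_url = f"{base_url.rstrip('/')}/{uuid.uuid4().hex}"
    _, missing_body = fetch(probe_url)

    # Treat the candidate as 'not found' if it is nearly identical to the probe.
    similarity = difflib.SequenceMatcher(None, body, missing_body).ratio()
    return similarity >= threshold


def fake_fetch(url: str) -> tuple[int, str]:
    """Stand-in for a real HTTP client: every unknown URL returns 200."""
    pages = {"https://example.com/login": (200, "<h1>Sign in</h1><form>...</form>")}
    return pages.get(url, (200, "<h1>Oops, page not found</h1>"))


real_page = looks_like_custom_404(
    fake_fetch, "https://example.com", "https://example.com/login"
)
missing_page = looks_like_custom_404(
    fake_fetch, "https://example.com", "https://example.com/old-admin"
)
```

Passing `fetch` in as a parameter keeps the sketch independent of any particular HTTP library. In practice the threshold would need tuning, since real error pages often embed the requested path or a timestamp.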

Automating the scanning process produces post-scan challenges such as correlating results and hot-patching vulnerabilities at the WAF level. In particular, Ferruh noted that web application firewalls (WAFs) won’t solve the security problem but can serve as a defense, making hacking harder, though not impossible. False positives, however, are the largest challenge, and the one that Netsparker was designed to solve.

- So, thanks to your automated web application security scanner, thousands of issues have been identified. Now what?

- How many of the identified vulnerabilities are real?

- What’s the real risk?

- How long would it take to review all vulnerabilities to see which are false positives?

- And what kind of technical expertise do you need to accomplish this?

To answer these questions, Ferruh set out another principle.

“Automation Without Accuracy Cannot Scale.”

Scaling doesn’t work without automation; automation doesn’t work without accuracy. Accuracy requires the elimination of false positives. Ferruh recounted how he was told at the start of his career in web security that the automated elimination of false positives was impossible. It had to be done manually.

But if you’re told there’s a vulnerability, how do you know it is real? You exploit it. If you get hacked, it’s real. Ferruh designed Netsparker to automate this exploitation process, and to do it safely, without breaking the app or deleting data. This is possible because of Ferruh’s third rule.

“If it’s Exploitable, It Cannot Be a False Positive.”
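Read as a triage rule, this principle splits scanner output into two piles: findings that come with evidence from a safe exploit, which can go straight to developers, and findings that still need a human to look at them. The sketch below illustrates that split under stated assumptions. The `Finding` type, its `proof` field, and the sample findings are hypothetical and do not reflect Netsparker's actual report format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    name: str
    url: str
    # Evidence captured by a safe, read-only exploit, if one succeeded.
    proof: Optional[str] = None


def triage(findings: list[Finding]) -> tuple[list[Finding], list[Finding]]:
    """Split findings into (confirmed, needs_review) by presence of proof."""
    confirmed = [f for f in findings if f.proof]
    needs_review = [f for f in findings if not f.proof]
    return confirmed, needs_review


# Made-up scan results for illustration only.
findings = [
    Finding(
        "SQL injection",
        "https://www.example.com/item?id=1",
        proof="database version string read back through the injection",
    ),
    Finding("Possible cross-site scripting", "https://www.example.com/search"),
]

confirmed, needs_review = triage(findings)
```

Only the second pile costs reviewer time, which is why the share of findings that arrive with proof determines how far the process can scale.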

Now, when developers receive a security report listing vulnerabilities, they have no complaints about the inclusion of false positives. The security report contains not just the vulnerability name but also all the data they need to deal with it. Before, there was the problem of what Ferruh called “crying wolf syndrome”: real vulnerabilities not getting addressed because of all the previous false positives.

Ferruh concluded his talk on the optimistic note that securing thousands of web applications is possible when organizations use the right tools for the job and plan for the long term. Now, you can know what online assets you have. You can know what can be automated. An army-sized security team is unnecessary. Once you know you have real vulnerabilities, you can immediately prioritize them, assign risks and tasks, and fix them early.