Integer Overflow Errors

An integer overflow happens when a program tries to store an integer value that is too big for the declared integer type. It is a type of arithmetic overflow error that can not only lead to incorrect results and system instability but also cause buffer overflows and provide an entry point for attackers. Let's see why integer overflow errors are possible, how they can be dangerous, and what you can do to prevent them.

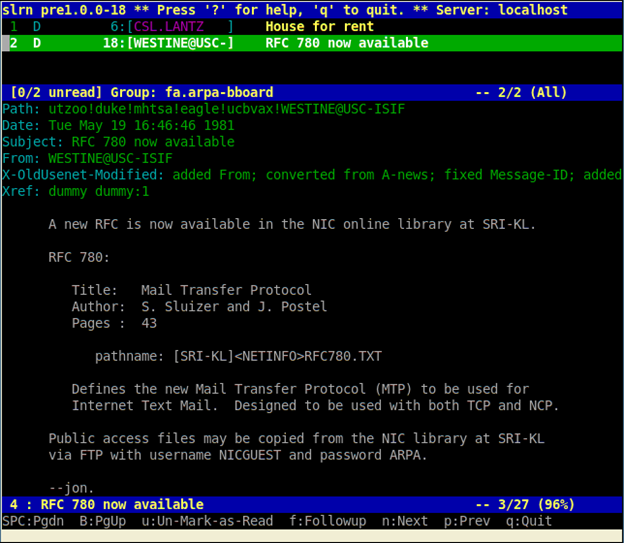

Why Integer Overflows Happen

At the most basic level, an integer overflow occurs when the result of an arithmetic operation needs more bits than the target variable has. For example, the biggest number you can store in a 32-bit unsigned integer variable is 4,294,967,295. In hexadecimal notation, this is 0xFFFFFFFF, which makes it clear that every byte already holds its maximum value (i.e. all 32 bits are set). If a calculation gives a larger number, the result does not fit in the 32 bits available for this data type and you get an overflow.
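To make the 32-bit limit concrete, here is a minimal Python sketch. Python's own integers grow as needed and never overflow, so the fixed width is only modeled here; the helper names `max_unsigned` and `fits_unsigned` are made up for this illustration.

```python
def max_unsigned(bits: int) -> int:
    """Largest value representable in an unsigned integer of the given width."""
    return (1 << bits) - 1


def fits_unsigned(value: int, bits: int) -> bool:
    """True if a non-negative value needs no more than `bits` bits."""
    return value >= 0 and value.bit_length() <= bits


if __name__ == "__main__":
    limit = max_unsigned(32)
    print(limit, hex(limit))              # 4294967295 0xffffffff
    print(fits_unsigned(limit, 32))       # True
    print((limit + 1).bit_length())       # 33: one bit more than the type has
    print(fits_unsigned(limit + 1, 32))   # False
```

Adding just 1 to the maximum value already needs a 33rd bit, which is exactly the situation described above.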

The behavior in an integer overflow situation depends on the hardware, compiler, and programming language. In most modern systems, the value doesn't actually overflow into adjacent memory bits but is wrapped around or truncated using a modulo operation to fit in the variable.

For unsigned integers, this usually means retaining only the least significant bits (for a 32-bit type, the result modulo 2^32), in effect wrapping the result around zero. For example, if you have a 32-bit unsigned integer with the maximum value and increment it by 42, you might get 4,294,967,295 + 42 = 41. In some specialized hardware, such as signal processors, there is no wraparound or truncation in these cases: the result is simply clamped to the largest representable value.
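The two behaviors described above, wraparound and clamping, can be sketched in Python as follows. This is an illustration only: the function names are hypothetical, both helpers assume non-negative inputs, and the 32-bit width is emulated with a mask because Python integers do not overflow. The `ctypes.c_uint32` line is included as a cross-check, since that type does no overflow checking and keeps only the low 32 bits.

```python
import ctypes

UINT32_MAX = 0xFFFFFFFF  # 4,294,967,295


def wrapping_add_u32(a: int, b: int) -> int:
    """Add two non-negative values and keep only the low 32 bits (modulo 2**32)."""
    return (a + b) & UINT32_MAX


def saturating_add_u32(a: int, b: int) -> int:
    """Add two non-negative values and clamp the result to the 32-bit maximum."""
    return min(a + b, UINT32_MAX)


if __name__ == "__main__":
    print(wrapping_add_u32(UINT32_MAX, 42))        # 41
    print(saturating_add_u32(UINT32_MAX, 42))      # 4294967295
    print(ctypes.c_uint32(UINT32_MAX + 42).value)  # 41
```

Masking with `0xFFFFFFFF` gives the same result as taking the sum modulo 2**32, which is why the 4,294,967,295 + 42 example ends up at 41.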

For signed integer types, things get even weirder due to the way negative numbers are represented in binary. Because the leftmost bit of a signed integer is set only for negative numbers, a positive value that overflows can actually become negative. For a 32-bit signed integer, adding 1 to 2,147,483,647 typically wraps around to -2,147,483,648. Similarly, if a negative value falls below the minimum value for the current signed type, you get what is often called underflow, the negative counterpart of overflow.
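The sign flip can be reproduced with a short Python sketch that reinterprets the low 32 bits of a value as a two's complement signed integer. The helper `to_int32` is a hypothetical name, and the code models wraparound as seen on typical two's complement hardware; it is not a statement about any particular language, and some languages (C, for example) leave signed overflow undefined.

```python
INT32_MAX = 2**31 - 1   #  2,147,483,647
INT32_MIN = -(2**31)    # -2,147,483,648


def to_int32(value: int) -> int:
    """Interpret the low 32 bits of `value` as a two's complement signed integer."""
    value &= 0xFFFFFFFF              # keep only 32 bits
    if value & 0x80000000:           # leftmost bit set: the value is negative
        return value - (1 << 32)
    return value


if __name__ == "__main__":
    print(to_int32(INT32_MAX + 1))   # -2147483648: overflow flips the sign
    print(to_int32(INT32_MIN - 1))   # 2147483647: "underflow" wraps the other way
```

In both cases only the leftmost bit decides the sign, so a result that is off by one bit lands at the opposite end of the range.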