How to choose the right web security scanner to reduce false negatives

What are false negatives, and why do automated web application security scanners sometimes fail to detect a vulnerability? This post explains what false negatives are and what to look for when choosing an automated web vulnerability scanner, so that it detects as many vulnerabilities as possible instead of leaving security gaps for malicious attackers to exploit.

As we have seen in a previous blog post, false positives have a long-term impact both on the security of your web applications and on the procedures you use to secure them. We even have a whole white paper on false positives. But there are also false negatives: web application vulnerabilities that an automated vulnerability scanner fails to detect. False negatives can have a similar long-term impact, because your web applications keep vulnerabilities that malicious hackers can exploit.

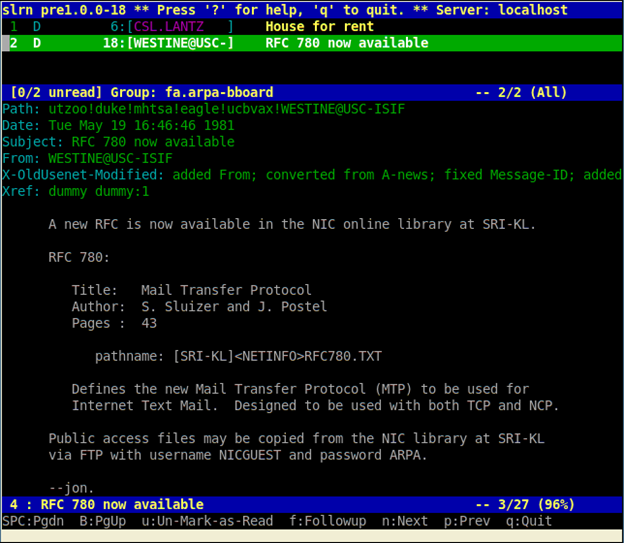

What causes false negatives?

The main reason an automated web application security scanner fails to detect a vulnerability is that its crawler never reached the vulnerable component, so the scanner did not know it had to test it. This limitation raises a number of questions, most notably: can automated web application security scanners be trusted, and should they be used in web application security testing?

Choosing the right web application security scanner

The answer is yes, automated web application security scanners should be used, but you need to do your homework properly when choosing one. One of the main features to look for in a web application security scanner for your business is how well its crawler can crawl your web applications. Before the scanner starts attacking a web application, the crawler crawls all of the application's components to identify every input and attack surface. If an attack surface is not crawled, it is not scanned for vulnerabilities, and any vulnerability in that component will not be reported.
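To illustrate why an uncrawled component turns into a false negative, here is a minimal sketch of the crawl phase, assuming a simple link-following, breadth-first crawler. The in-memory `SITE` dictionary stands in for real HTTP responses, and every name (`SITE`, `PageParser`, `crawl`) is hypothetical; real scanner crawlers are far more sophisticated. The `/admin/debug` page exists, but nothing links to it, so it never appears among the attack surfaces handed to the attack phase.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory site standing in for real HTTP responses.
SITE = {
    "/": '<a href="/products">Products</a> <a href="/contact">Contact</a>',
    "/products": '<a href="/products?id=1">Item 1</a>',
    "/products?id=1": "<p>Item 1</p>",
    "/contact": '<form action="/send" method="post"><input name="email"><input name="message"></form>',
    # Exists on the server, but no page links to it.
    "/admin/debug": '<form action="/admin/run"><input name="cmd"></form>',
}

class PageParser(HTMLParser):
    """Collects link targets and form input names from one HTML page."""
    def __init__(self):
        super().__init__()
        self.links = []
        self.inputs = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])
        elif tag == "input" and attrs.get("name"):
            self.inputs.append(attrs["name"])

def crawl(start="/"):
    """Breadth-first crawl; returns {url: [form input names]} for every page reached."""
    seen = {start}
    queue = deque([start])
    surfaces = {}
    while queue:
        url = queue.popleft()
        html = SITE.get(url)
        if html is None:  # broken link: nothing to parse
            continue
        parser = PageParser()
        parser.feed(html)
        surfaces[url] = parser.inputs
        for href in parser.links:
            target = urljoin(url, href)  # resolve relative links against the current page
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return surfaces

surfaces = crawl()
for url, inputs in surfaces.items():
    print(url, inputs)

# The attack phase only tests what the crawler found, so /admin/debug is never tested.
print("/admin/debug" in surfaces)  # False
```

In this toy model, any vulnerability behind `/admin/debug` would go unreported no matter how good the attack phase is, which is exactly the false negative described above.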

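Many of today’s modern web applications use custom 404 error pages, URL rewrite rules for search-engine-friendly URLs, and anti-CSRF mechanisms to protect against cross-site request forgery attacks. Although these features make a website more user-friendly and secure, they can prevent a crawler from identifying all attack surfaces. For example, a custom 404 page is often returned with an HTTP 200 status code, so a crawler cannot easily tell a missing page from a real one; rewritten URLs such as /products/42 embed parameters in the path, so they no longer look like inputs; and anti-CSRF tokens cause replayed form submissions to be rejected.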
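The custom 404 problem can be illustrated with a common, generic heuristic (not necessarily the one any particular scanner uses): request a random path that almost certainly does not exist, remember the page the server returns, and treat any later response that is nearly identical to it as "not found", even when its status code is 200. The `fetch` function, the toy `PAGES` site, the `strip_path` helper and the 0.9 similarity threshold are hypothetical choices for illustration only.

```python
import difflib
import uuid

# Hypothetical site: unknown paths return HTTP 200 with a friendly error page
# (a "custom 404") instead of a real 404 status code.
PAGES = {
    "/": "<h1>Welcome</h1><p>Latest offers</p>",
    "/about": "<h1>About us</h1><p>Our team</p>",
}

def fetch(path):
    """Hypothetical stand-in for an HTTP GET; returns (status_code, body)."""
    if path in PAGES:
        return 200, PAGES[path]
    return 200, f"<h1>Oops!</h1><p>Sorry, {path} could not be found.</p>"

def strip_path(body, path):
    """Remove the echoed request path so error pages that differ only by it compare equal.
    A bare "/" is skipped so we don't strip every slash out of the HTML."""
    return body.replace(path, "") if len(path) > 1 else body

def make_soft_404_checker(fetch, threshold=0.9):
    """Learn the site's 'not found' page from a random path, then return a checker."""
    probe = "/" + uuid.uuid4().hex  # a path that almost certainly does not exist
    _, baseline = fetch(probe)
    baseline = strip_path(baseline, probe)

    def is_missing(path):
        status, body = fetch(path)
        if status == 404:  # a real 404 needs no heuristic
            return True
        # Similarity to the learned error page decides for 200 responses.
        ratio = difflib.SequenceMatcher(None, baseline, strip_path(body, path)).ratio()
        return ratio >= threshold

    return is_missing

is_missing = make_soft_404_checker(fetch)
print(is_missing("/about"))     # False: a real page
print(is_missing("/old-page"))  # True: HTTP 200, but it is the error page
```

Without a check like this, a crawler would either treat every error page as a new page to attack or miss that a link is dead; a similarity ratio is used instead of exact equality because error pages often echo the requested URL or include other small variations.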

Some less advanced web application security scanners can be manually configured and tweaked to crawl such web applications, but there is still no guarantee that they will identify all attack surfaces. Configuring crawlers is also very difficult and time-consuming. Unless you are a seasoned penetration tester with years of experience, it is very hard to understand what is happening under the hood of a web scanner. So unless your business can afford a dedicated, experienced penetration tester to maintain the scanner, such solutions are not for you.

The purpose of a web application security scanner

The whole point of investing in an automated web application security scanner is to ease the process of penetration testing and automate it as much as possible, not to spend countless hours tweaking it. A good web application security scanner should support at least 90 to 95 percent of web application technologies out of the box. The best way to find out whether an automated vulnerability scanner yields a proper return on investment for your business is to try several different products on one of your real-life websites or applications and check whether they can crawl the whole site without manual tweaking.
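One way to make "crawl the whole site" measurable during such a trial is to compare the URLs each scanner reports as crawled with a list of URLs you know exist, for example from your sitemap. The sketch below assumes a standard sitemaps.org XML sitemap; the example URLs and the two scanner URL lists are hypothetical stand-ins for whatever each product lets you export.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap for the site used in the trial.
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/products</loc></url>
  <url><loc>https://example.com/contact</loc></url>
  <url><loc>https://example.com/checkout</loc></url>
</urlset>"""

def sitemap_urls(xml_text):
    """Return the set of <loc> URLs listed in a sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}

def crawl_coverage(expected, crawled):
    """Return (fraction of expected URLs that were crawled, sorted list of missed URLs)."""
    if not expected:
        return 1.0, []
    missed = sorted(expected - set(crawled))
    return 1 - len(missed) / len(expected), missed

expected = sitemap_urls(SITEMAP)

# Hypothetical URL lists exported from two scanners after a crawl with no manual tweaking.
scanner_a = ["https://example.com/", "https://example.com/products",
             "https://example.com/contact", "https://example.com/checkout"]
scanner_b = ["https://example.com/", "https://example.com/products",
             "https://example.com/contact"]

for name, crawled in (("A", scanner_a), ("B", scanner_b)):
    ratio, missed = crawl_coverage(expected, crawled)
    print(f"Scanner {name}: {ratio:.0%} coverage, missed {missed}")
```

A sitemap only lists pages you chose to publish, so treat this as a lower bound: the URLs a scanner misses are the places where false negatives can hide.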

Automatically detect web vulnerabilities and avoid false negatives

Netsparker web application security scanner has a crawler that can crawl web applications built with any type of framework. You do not need to configure custom 404 error pages or URL rewrite rules: the scanner detects them heuristically and configures itself automatically to ease the job for you. The Netsparker crawler also has a built-in AJAX and JavaScript engine that parses, executes and analyses these scripts. Last but not least, Netsparker also supports anti-CSRF tokens, so web applications that use them can still be crawled automatically and all of their attack surfaces identified. For more information about the Netsparker crawler, refer to the Advanced Web Application Security Scanning section.
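Why anti-CSRF tokens matter to a crawler can be sketched in generic terms, without claiming this is how Netsparker implements it: if each form render issues a one-time token, a crawler that reuses a captured form gets its later requests rejected, while one that re-fetches the form and copies the current hidden token into every test request keeps getting through. `ToyApp`, the `csrf_token` field name, `build_attack_body` and the `TOKEN_HINTS` name heuristic are all hypothetical.

```python
import secrets
from html.parser import HTMLParser
from urllib.parse import parse_qs, urlencode

# Hypothetical heuristic: field names that usually hold anti-CSRF tokens.
TOKEN_HINTS = ("csrf", "xsrf", "token", "nonce")

class FormFields(HTMLParser):
    """Collects name/value pairs of every <input> in a form."""
    def __init__(self):
        super().__init__()
        self.fields = {}

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "input" and attrs.get("name"):
            self.fields[attrs["name"]] = attrs.get("value") or ""

def build_attack_body(form_html, target_field, payload):
    """Copy every field (including the current token) and inject the payload into one field.
    Returns the URL-encoded body and the names of fields that look like tokens."""
    parser = FormFields()
    parser.feed(form_html)
    fields = parser.fields
    tokens = [name for name in fields if any(h in name.lower() for h in TOKEN_HINTS)]
    fields[target_field] = payload
    return urlencode(fields), tokens

class ToyApp:
    """Hypothetical app that issues a one-time anti-CSRF token on every form render."""
    def __init__(self):
        self.valid_tokens = set()

    def get_form(self):
        token = secrets.token_hex(8)
        self.valid_tokens.add(token)
        return ('<form method="post" action="/comment">'
                f'<input type="hidden" name="csrf_token" value="{token}">'
                '<input name="comment"></form>')

    def post(self, body):
        token = parse_qs(body).get("csrf_token", [""])[0]
        if token not in self.valid_tokens:
            return 403  # missing, forged or already-used token
        self.valid_tokens.discard(token)  # tokens are single-use
        return 200

app = ToyApp()
payloads = ["'", "<script>alert(1)</script>"]

# Naive crawler: fetch the form once and reuse it (and its token) for every payload.
stale_form = app.get_form()
replayed = [app.post(build_attack_body(stale_form, "comment", p)[0]) for p in payloads]

# Token-aware crawler: re-fetch the form, and therefore a fresh token, before every request.
refreshed = [app.post(build_attack_body(app.get_form(), "comment", p)[0]) for p in payloads]

print(replayed)   # [200, 403]
print(refreshed)  # [200, 200]
```

In the naive case every test after the first is rejected before it reaches the vulnerable code, so the scanner sees nothing to report even if the `comment` field is vulnerable.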