Facebook & Cambridge Analytica Data Breach

This blog post examines the Facebook and Cambridge Analytica Data Breach news, asks what might change at Facebook and discusses whether users or organisations are responsible. It also examines whether data portability or security is the priority and sets out some basic questions web application vendors need to ask of their data security policies.

What Happened with Cambridge Analytica?

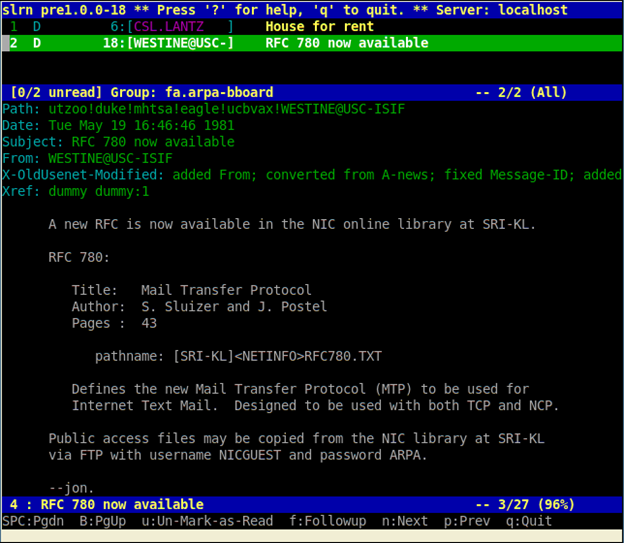

When it was revealed that a company connected to President Trump's 2016 campaign, Cambridge Analytica (CA), had been able to access data from 50 million Facebook accounts, and that Facebook had suspended their (and SCL's) accounts, you may have noticed one of three things, depending on your normal habitat:

- Regular users probably continued accessing their highly convenient web and mobile applications, mostly unconcerned

- The wider technology industry, much better informed, dashed off a flurry of indignant tweets (using the #deletefacebook hashtag) and blog posts about personal security and corporations' lack of adherence to the rules

- But, the web security industry, much more used to thinking and working at scale, sat up and paid close attention

It's difficult to wrap your brain around the numbers, yet if Facebook were a country, its population would lag behind only China and India. This is no small matter.

What's the Back Story?

- The leak of data actually happened in 2015, aeons ago in technology terms. In a recent CNN interview conducted by Laurie Segall, Zuckerberg admitted that it was a mistake for Facebook not to inform affected users about the data breach at the time. In the same interview, Zuckerberg called out a developer, Aleksandr Kogan, whom he accused of misusing information he had access to.

- Cambridge Analytica's timeline records that their research partner, GSR, was reported by the Guardian in December of 2015 as having breached Facebook's terms of service and possibly the Data Protection Act. At the time, Facebook asked both GSR and Cambridge Analytica to delete the data.

- Fast forward to March 2017, when CA ran an internal audit to ensure that all GSR data had been deleted, and then informed Facebook. Then in late 2017 and into the early part of this year, the Information Commissioner's Office (ICO) contacted CA to ask for details about how they processed data, including data on US nationals in the UK and alleged work on Brexit. CA reported that they cooperated fully, providing all requested information.

- In March 2018, when Facebook suspended CA and SCL accounts, the full impact hit the public consciousness.

What Will Change at Facebook?

Zuckerberg revealed in the CNN interview that they had over 15,000 people working on security, though he warned security was not something that could be solved 100%. And, he was at some pains to point out that, while users can take advantage of targeted advertisements that are based on coveted demographics, Facebook does not actually sell that data – a common enough accusation levelled at many organisations with a similarly gargantuan user base.

Zuckerberg outlined the remediation activities that would now be put in place:

- Cambridge Analytica would be investigated thoroughly, to see whether they still had access to the data

- Developers would now have more restrictions placed on them

- Those who developed apps for Facebook, who have access to large swathes of data, would also be investigated

- Other suspicious activities would be examined

So far, so good – if staggeringly scant in detail, given the epic size of his security team.

For all such organisations, not just Facebook, we would like to see:

- A copy of a new draft of the user agreement

- The details of a few internal policies on how data is managed: some information on data capture, use, storage, sharing and destruction

- And, ideally, a facility in the style of the UK's Freedom of Information Act, under which individuals (within certain parameters) have the legal right to a copy of all their data as held by public sector bodies, and which could be accessed online. Why not?

Are Blithe Consumers at Fault?

Flipping things right around, let's think like a consumer for a second. How many times a week do you willingly hand over data to someone?

- You order your skinny latte and get asked at the end of the purchase whether you want a loyalty card. This may involve completing a form, containing at least your name, address and contact details. Think: where is this form then stored, until it is entered into a database? And, who has access to that database?

- You download a health app to your device of choice and impatiently check the box to confirm that you've read the Terms and Conditions, which you presume includes some mention of how that organization handles your data.

The web security industry is singing from the same hymn sheet, at least on this point: consumers simply cannot have the entire weight of their own data security resting on their shoulders. It is up to application developers and vendors to ensure that what they develop is, in the first instance, secure by design (Security by Design being a concept outlined in the EU's new GDPR), takes account of Personal Data, and is regularly updated.

Which Takes Precedence? Data Portability or Data Security?

Data portability is the concept that while data may be collected by one organization for one purpose, it may later be used by the same (or another) organization for a related, or entirely different, purpose, something we rely on every day. This accepted practice is standard within law enforcement and emergency services, for example, where, in certain extreme circumstances and within defined parameters, organisations can contact the authorities to help them pinpoint and investigate someone who may have expressed violent intentions on a web forum. Yet, on the other hand, while laws abound around data use and security, individuals and organizations can, and do, flout those parameters and regulations with alacrity.

Ask any one of our Security Researchers this question, and the answer on which takes precedence is going to be: Data Security, no doubt.

Yet if we all want the freedom offered by our smartphone apps, and organizations want the convenience of sharing data to offer other services to their partners' consumers, we have to be willing to understand that our data is shared, many times with permission we granted years ago and not just in emergency situations.

And, as technology companies, perhaps we need to become much more adept at recognising when an opportunity we present to partners (access to reams of personal data) might just be too good to pass up. The GDPR, to which all organizations handling data belonging to EU citizens must adhere, requires not only that organizations identify and manage their own and customers' data securely, but also that they investigate in detail how their partners, and those with whom they share data, handle it, and ensure that they comply with the same regulations. Did Facebook conduct due diligence in this case?

Further, do we, as organisations, have written policies, clear procedures and regular training for staff, contractors, developers and partners, to ensure that everyone who has access to personal and other data knows how it must be handled and follows those procedures? Do we have further procedures for discovering when this is not the case?

Data Security Goes Beyond Automated Vulnerability Scanning

As web applications increase in complexity, vendors have to consider the technical specifications, a robust and functional yet appealing UI, and code that is free of bugs. One of those specifications often concerns user permissions: who has access to the web application, and at what level. When permissions are specified or implemented incorrectly, exposing the web application, its users and data, the result is often a logical vulnerability. One of the most easily recognised types concerns access control. The reason that automated scanning tools are unable to detect these vulnerabilities is simply that, while logic and decision making are involved, deciding who should have access is a business decision, made by someone familiar with practices in the industry and the organization in particular, as well as with the web application.
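To make the access control point concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `User` and `Record` types, the `RECORDS` store and the rule "only the owner or an admin may read a record" are assumptions made purely for illustration, not a description of how Facebook or any particular application works. The point is that both functions return well-formed responses, so nothing in the output tells an automated scanner that the first one violates the business rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    user_id: int
    role: str  # hypothetical roles: "member" or "admin"


@dataclass(frozen=True)
class Record:
    record_id: int
    owner_id: int
    body: str


# Hypothetical in-memory store standing in for a real database.
RECORDS = {
    1: Record(1, owner_id=10, body="Alice's profile data"),
    2: Record(2, owner_id=20, body="Bob's profile data"),
}


def get_record_insecure(user: User, record_id: int) -> Record:
    # Flawed: any logged-in user can read any record by guessing its ID.
    # The response looks perfectly valid, so there is no error to detect.
    return RECORDS[record_id]


def get_record(user: User, record_id: int) -> Record:
    # Assumed business rule: only the owner or an admin may read a record.
    record = RECORDS[record_id]
    if user.role != "admin" and record.owner_id != user.user_id:
        raise PermissionError("not allowed to read this record")
    return record
```

Whether the second function is "correct" depends entirely on the rule the organization has chosen, which is why someone who knows that rule has to review it.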

Next month, the EU regulations will require all organizations that handle data belonging to EU citizens to adhere to fairly straightforward rules concerning who has access to the Personal Data we acquire from individuals and manage on their behalf. Organisations need to be able to answer the simplest of questions:

- Who does this data belong to? And, who should have access to it?

- Can we justify retaining it? And, for how long? What data retention process is in place to check that when data has served its usefulness, it is automatically placed on a disposal schedule?

- Are we due to share it with other organizations, and do we have the owner's express permission to do so? And, can we demonstrate that those organizations in turn have similar access control, disposal and other security policies?

- Do we know who currently has access to the data, both inside and outside our organization? If there are gaps in our security, have we put in place policies, permissions and checks to ensure that what we intended is what is actually happening?
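Some of the questions above can be turned into routine, checkable logic. The following Python sketch is one possible shape for that, under stated assumptions: the `PersonalDataItem` type, its `retention_days` and `shareable_with` fields, and the partner names are all hypothetical, and a real system would also need audit logging and a legal basis for each retention period.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class PersonalDataItem:
    subject_id: int            # who the data belongs to
    collected_on: date
    retention_days: int        # assumed, policy-defined retention period
    shareable_with: frozenset = frozenset()  # partners the owner has approved


def is_due_for_disposal(item: PersonalDataItem, today: date) -> bool:
    # Data is due for disposal once its retention period has elapsed.
    expires_on = item.collected_on + timedelta(days=item.retention_days)
    return today > expires_on


def may_share_with(item: PersonalDataItem, partner: str, today: date) -> bool:
    # Sharing needs the owner's express permission and unexpired data.
    return partner in item.shareable_with and not is_due_for_disposal(item, today)


def disposal_schedule(items, today: date):
    # Everything that has outlived its retention period.
    return [item for item in items if is_due_for_disposal(item, today)]
```

Running `disposal_schedule` on a regular basis would be one way to check that data which has served its usefulness is actually queued for disposal rather than quietly retained.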

Mark Zuckerberg announced that he was willing to testify in any U.S. government inquiry into the reported breach. He has also stated that he was not opposed to regulation of his social media company and has recognised that Facebook needed to be more publicly accountable. Many might add that such organisations should know the details of what partners and developers are doing on their behalf. In light of this latest breach, along with the infamous Equifax, Apache and Grammarly incidents, let's hope none of us ends up in the unenviable position of the organisations involved, realising what damage such an epic breach can do to our brand, not to mention the unknown, individual consequences of the breach of all types of personal data.