How We Found & Exploited a Layer 7 DoS Attack on FogBugz

This article examines how specific application behaviour in FogBugz, which we reported early in July 2017, could be manipulated to overload a system and cause a Denial of Service (DoS). Testing for this vulnerability involved checking HTTP status codes, response size and timing.

Modern-day Denial of Service (DoS) attacks cause much consternation in the web security industry because they are so inexpensive, easy… and devastating! While the cost of conducting such attacks decreases by the day, the damage caused to target systems escalates with each attack.

Attacks that capture the attention of the mass media use an army of infected devices to generate a massive amount of network traffic in order to take down target systems. They are typically low complexity network attacks. The objective is to render the system unusable for legitimate users. However, not all application layer Denial of Service (DoS) attacks are the same. Though many often aim to generate a very large amount of network traffic, sometimes it is enough to make only a few requests to achieve the desired effect.

In this article, I explain how specific application behavior I encountered in FogBugz (a web-based project management tool) might easily be used to overload a system. The Netsparker web application security scanner reported this issue in the latest version of FogBugz early in July 2017.

What to Check to Determine Whether a DoS Vulnerability Existed

The first indicator to check is HTTP status codes. This does not mean, though, that there is a problem every time we do not see ‘200 OK’ (the standard response for successful HTTP requests). It will become clear how this is useful to us in the example in the next section.

The second indicator is response size. If the database queries are not sufficiently controlled, when an unexpected situation occurs, the response size can get out of control.

Timing is the third important indicator. If the response to a request that you’ve already checked takes an unusually long time, this is probably the right place to test for DoS.
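The three indicators can be compared against a baseline request in a few lines of code. The following is a minimal sketch, not the tooling we actually used: the `Sample` type, the `dos_indicators` function and the factor-of-ten thresholds are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Sample:
    """One observed HTTP response: status code, body size in bytes, seconds taken."""
    status: int
    size_bytes: int
    seconds: float


def dos_indicators(baseline: Sample, probe: Sample,
                   size_factor: float = 10.0,
                   time_factor: float = 10.0) -> List[str]:
    """Return the indicators on which the probe deviates from the baseline.

    The thresholds are arbitrary illustrative defaults, not recommended values.
    """
    flags = []
    # Indicator 1: the status code differs from the baseline (e.g. 200 -> 504).
    if probe.status != baseline.status:
        flags.append(f"status {baseline.status} -> {probe.status}")
    # Indicator 2: the response body is far larger than the baseline body.
    if probe.size_bytes > baseline.size_bytes * size_factor:
        flags.append("response size")
    # Indicator 3: the response took far longer than the baseline response.
    if probe.seconds > baseline.seconds * time_factor:
        flags.append("response time")
    return flags
```

An empty list means the probe behaved like the baseline. Any flagged indicator only marks the request as worth a closer look; it is not proof of a vulnerability.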

How We Determined Fogbugz was Vulnerable to DoS

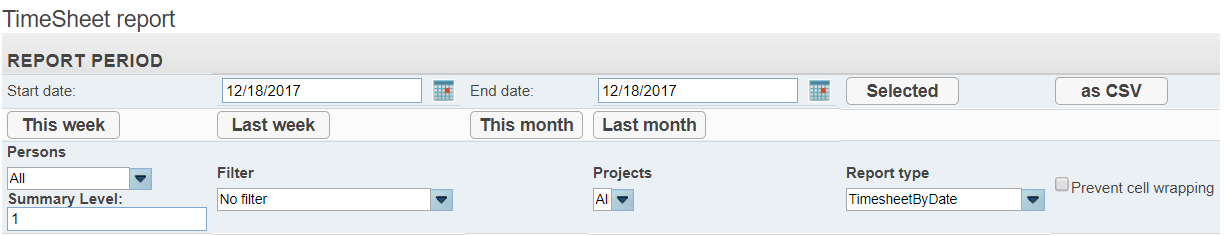

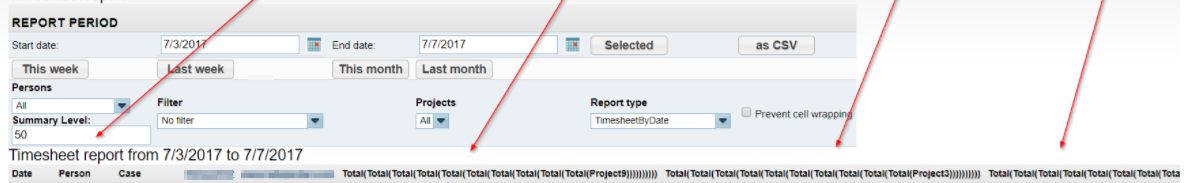

As with most project management tools, Fogbugz (now known by its new name, FogBugz) has functionality that allows users to create timesheets for tracking their working time. Users can examine activities on specific dates by filtering, as shown.

Once the filters are selected, the application makes a POST request and fetches the relevant records from the database. Every single user input is carefully sanitized to protect against attacks such as SQL injection and cross-site scripting. Initially, everything might seem to be normal, but there is a small detail that can easily be overlooked.

Let’s take a closer look at the Summary Level parameter and what it actually does. The default value of this parameter is ‘1’. When we send the request with this value, column headers (Date, Person, Case and Total) are displayed.

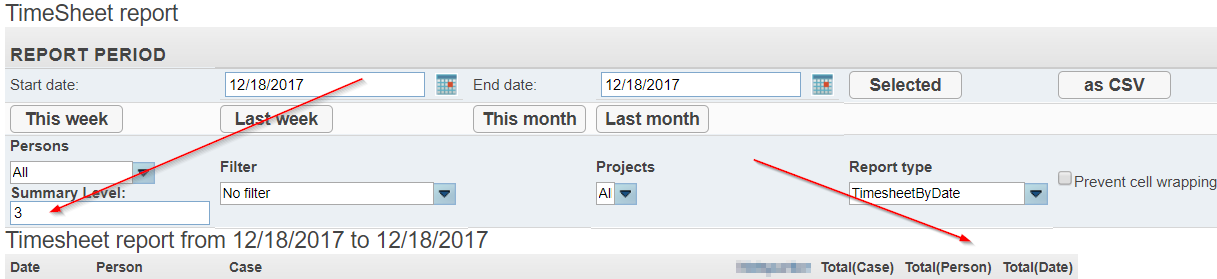

By entering higher numbers, we can produce more detailed tables. For example, when I entered ‘3’, the application added two further columns.

You may not yet have noticed the problem, but it will become clearer when we enter a higher number and look closer at how the column names change.

There are no limit checks, such as 1 < x < 10, for the entered value. Therefore, the amount of output the server generates keeps growing with the value, and large numbers produce what is effectively a runaway loop. To test this I entered 20,000, and all the browsers I tested this on crashed because the response size was too large.

The advanced tools that web browsers offer for developers may cater for many things, but they may not be enough at this point.

Checking Response Size and HTTP Status Codes With Curl

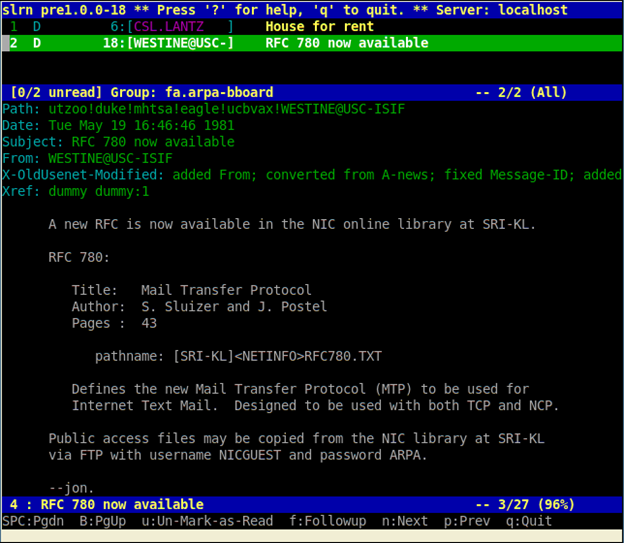

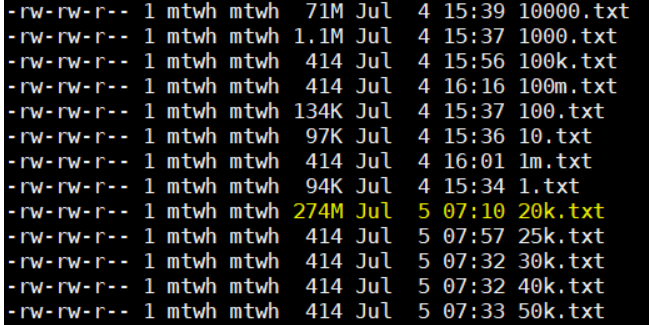

When I encounter browser crashes caused by problems like this, my preferred tool is curl. Curl is a command-line utility, available on Linux and most other platforms, that allows you to easily issue HTTP requests. You can simply send the request and view the response details.

In the screenshot, you can see the value of the Summary Level parameter I sent on the right hand side. The highlighted section (fifth row from the bottom) shows a value of 20,000. In this case, the response size grew to 274 megabytes.

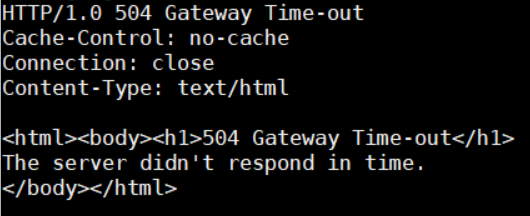

So, we are able to increase the response size from 94 KB to 274 MB per request. When we use values above 20,000, this will very quickly result in a 504 Gateway Timeout.
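To put those two figures in perspective, the growth factor can be worked out directly. This is a small sketch that assumes binary units (1 KB = 1024 bytes); the function name is ours.

```python
KIB = 1024          # assumption: the sizes above are in binary units
MIB = 1024 * KIB


def amplification(baseline_bytes: int, inflated_bytes: int) -> float:
    """How many times larger the inflated response is than the baseline."""
    return inflated_bytes / baseline_bytes


# 94 KB with the default value versus 274 MB with a value of 20,000.
factor = amplification(94 * KIB, 274 * MIB)
print(f"Each request returns about {factor:,.0f} times more data than the default")
```

Under that assumption the response grows by a factor of roughly 3,000, while the request itself barely changes in size.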

Conducting Timing Tests With Curl

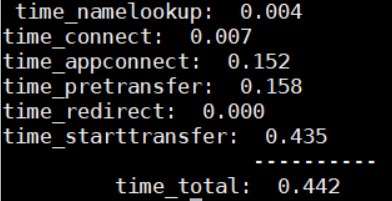

Curl also provides other timing details, such as time_namelookup, time_connect, and time_starttransfer. Another tip is that it supports formatted output templates through its --write-out option.
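These measurements are easy to script. Below is a rough sketch that wraps curl in Python and collects the status code, response size and timing values in one go. The `--write-out` variables are real curl variables, but the URL and the `summary_level` form field in the usage note are placeholders, not the actual FogBugz endpoint or parameter name, and `/dev/null` assumes a Unix-like system.

```python
import subprocess
from typing import Dict, List

# Real curl --write-out variables; their order defines the output columns.
FIELDS = ["http_code", "size_download", "time_namelookup",
          "time_connect", "time_starttransfer", "time_total"]


def build_curl_command(url: str, form_data: str) -> List[str]:
    """Build a curl command that discards the body and prints only the metrics."""
    # Produces a template such as "%{http_code} %{size_download} ...".
    template = " ".join(f"%{{{name}}}" for name in FIELDS)
    return ["curl", "--silent", "--output", "/dev/null",
            "--write-out", template, "--data", form_data, url]


def parse_metrics(output: str) -> Dict[str, float]:
    """Map the space-separated values printed by curl back to their names."""
    return {name: float(value) for name, value in zip(FIELDS, output.split())}


def measure(url: str, form_data: str) -> Dict[str, float]:
    """Send one POST request with curl and return its metrics."""
    result = subprocess.run(build_curl_command(url, form_data),
                            capture_output=True, text=True, check=True)
    return parse_metrics(result.stdout)
```

Calling something like `measure("https://fogbugz.example.invalid/timesheet", "summary_level=1")` and then repeating it with a larger value gives comparable numbers without ever loading the response in a browser. Only run this against systems you are authorized to test.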

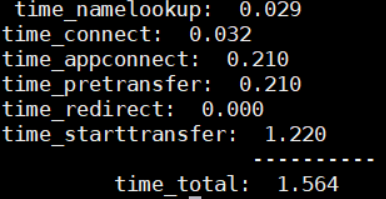

Here are the timing details provided when I used a default value (1) for the Summary Level parameter.

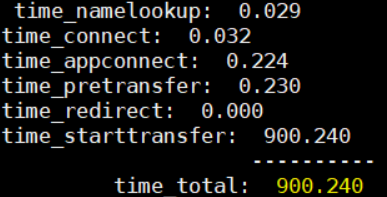

This was the response time for the request when the Summary Level parameter was set to 1000.

This was the response time for the request when the Summary Level parameter was set to 100,000.

The tests revealed that I was able to increase the response time by a factor of up to 600 per request. It is possible to do the same thing for the response length.

Overlooking Input Checks Can Lead to DoS on the Application Layer

As we have demonstrated in the FogBugz example, if attackers can make the server generate a huge amount of data using a relatively small request, this can lead to a DoS. Using a simple multi-threaded script, the attacker can amplify this effect and eventually make the server unresponsive for all legitimate users. In this case, the maximum size of the response that the server could handle was 274 MB. If the necessary checks had been made on the user input, such as limiting the parameter to a range like 1 < x < 10, we would not have been able to produce such a huge response.

In this case, performing these input checks would have prevented the vulnerability that led to the DoS.
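As an illustration of what such a check might look like, here is a minimal server-side sketch. It is not FogBugz code: the function name and the bounds are assumptions, and the range is made inclusive (1 to 10) so that the default value of 1 is still accepted.

```python
MIN_LEVEL = 1    # assumption: the default value must remain valid
MAX_LEVEL = 10   # assumption: illustrative upper bound


def parse_summary_level(raw: str) -> int:
    """Convert the submitted value to an int and reject anything out of range."""
    try:
        level = int(raw)
    except (TypeError, ValueError):
        raise ValueError("summary level must be an integer") from None
    if not MIN_LEVEL <= level <= MAX_LEVEL:
        raise ValueError(
            f"summary level must be between {MIN_LEVEL} and {MAX_LEVEL}")
    return level
```

Rejecting the request before any database query or table rendering takes place means a value such as 20,000 costs the server almost nothing.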